How to add chat history

This guide previously used the RunnableWithMessageHistory abstraction. You can access this version of the documentation in the v0.2 docs.

As of the v0.3 release of LangChain, we recommend that LangChain users take advantage of LangGraph persistence to incorporate memory into new LangChain applications.

If your code is already relying on RunnableWithMessageHistory or BaseChatMessageHistory, you do not need to make any changes. We do not plan on deprecating this functionality in the near future as it works for simple chat applications and any code that uses RunnableWithMessageHistory will continue to work as expected.

Please see How to migrate to LangGraph Memory for more details.

In many Q&A applications we want to allow the user to have a back-and-forth conversation, meaning the application needs some sort of "memory" of past questions and answers, and some logic for incorporating those into its current thinking.

In this guide we focus on adding logic for incorporating historical messages.

This is largely a condensed version of the Conversational RAG tutorial.

We will cover two approaches:

- Chains, in which we always execute a retrieval step;

- Agents, in which we give an LLM discretion over whether and how to execute a retrieval step (or multiple steps).

For the external knowledge source, we will use the same LLM Powered Autonomous Agents blog post by Lilian Weng from the RAG tutorial.

Setup

Dependencies

We'll use OpenAI embeddings and an in-memory vector store in this walkthrough, but everything shown here works with any Embeddings, VectorStore, or Retriever.

We'll use the following packages:

%%capture --no-stderr

%pip install --upgrade --quiet langchain langchain-community langgraph beautifulsoup4

We need to set the environment variable OPENAI_API_KEY. It can be set directly, loaded from a .env file, or entered at a prompt like so:

import getpass

import os

if not os.environ.get("OPENAI_API_KEY"):
    os.environ["OPENAI_API_KEY"] = getpass.getpass()
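The text above mentions loading the key from a .env file. The following is a minimal standard-library sketch of that idea. The helper name `load_env_file`, the placeholder variable `EXAMPLE_API_KEY`, and the simple KEY=VALUE format are assumptions for illustration; a dedicated package such as python-dotenv handles quoting and edge cases more thoroughly.

```python
import os
import tempfile


def load_env_file(path: str) -> dict:
    """Parse simple KEY=VALUE lines and copy them into os.environ.

    Hypothetical helper: variables that are already set are not overwritten.
    """
    loaded = {}
    with open(path, encoding="utf-8") as handle:
        for raw_line in handle:
            line = raw_line.strip()
            # Skip blank lines, comments, and lines without an assignment.
            if not line or line.startswith("#") or "=" not in line:
                continue
            key, _, value = line.partition("=")
            key, value = key.strip(), value.strip().strip("'\"")
            loaded[key] = value
            os.environ.setdefault(key, value)
    return loaded


# Demonstration with a throwaway file and a placeholder variable name.
with tempfile.NamedTemporaryFile("w", suffix=".env", delete=False) as tmp:
    tmp.write("# example file\nEXAMPLE_API_KEY='not-a-real-key'\n")

values = load_env_file(tmp.name)
os.remove(tmp.name)
```

Using `setdefault` means a key exported in the shell takes precedence over the file, which is usually the safer default.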

LangSmith

Many of the applications you build with LangChain will contain multiple steps with multiple LLM calls. As these applications get more complex, it becomes crucial to be able to inspect what exactly is going on inside your chain or agent. The best way to do this is with LangSmith.

Note that LangSmith is not needed, but it is helpful. If you do want to use LangSmith, after you sign up at the link above, make sure to set your environment variables to start logging traces:

os.environ["LANGCHAIN_TRACING_V2"] = "true"

if not os.environ.get("LANGCHAIN_API_KEY"):
    os.environ["LANGCHAIN_API_KEY"] = getpass.getpass()

Chains

In a conversational RAG application, queries issued to the retriever should be informed by the context of the conversation. LangChain provides a create_history_aware_retriever constructor to simplify this. It constructs a chain that accepts keys input and chat_history as input, and has the same output schema as a retriever. create_history_aware_retriever requires as inputs:

- LLM;

- Retriever;

- Prompt.

First we obtain these objects:

LLM

We can use any supported chat model:

- OpenAI

- Anthropic

- Azure

- Google

- Cohere

- NVIDIA

- FireworksAI

- Groq

- MistralAI

- TogetherAI

pip install -qU langchain-openai

import getpass

import os

os.environ["OPENAI_API_KEY"] = getpass.getpass()

from langchain_openai import ChatOpenAI

llm = ChatOpenAI(model="gpt-4o-mini")

pip install -qU langchain-anthropic

import getpass

import os

os.environ["ANTHROPIC_API_KEY"] = getpass.getpass()

from langchain_anthropic import ChatAnthropic

llm = ChatAnthropic(model="claude-3-5-sonnet-20240620")

pip install -qU langchain-openai

import getpass

import os

os.environ["AZURE_OPENAI_API_KEY"] = getpass.getpass()

from langchain_openai import AzureChatOpenAI

llm = AzureChatOpenAI(
    azure_endpoint=os.environ["AZURE_OPENAI_ENDPOINT"],
    azure_deployment=os.environ["AZURE_OPENAI_DEPLOYMENT_NAME"],
    openai_api_version=os.environ["AZURE_OPENAI_API_VERSION"],
)

pip install -qU langchain-google-vertexai

import getpass

import os

os.environ["GOOGLE_API_KEY"] = getpass.getpass()

from langchain_google_vertexai import ChatVertexAI

llm = ChatVertexAI(model="gemini-1.5-flash")

pip install -qU langchain-cohere

import getpass

import os

os.environ["COHERE_API_KEY"] = getpass.getpass()

from langchain_cohere import ChatCohere

llm = ChatCohere(model="command-r-plus")

pip install -qU langchain-nvidia-ai-endpoints

import getpass

import os

os.environ["NVIDIA_API_KEY"] = getpass.getpass()

from langchain_nvidia_ai_endpoints import ChatNVIDIA

llm = ChatNVIDIA(model="meta/llama3-70b-instruct")

pip install -qU langchain-fireworks

import getpass

import os

os.environ["FIREWORKS_API_KEY"] = getpass.getpass()

from langchain_fireworks import ChatFireworks

llm = ChatFireworks(model="accounts/fireworks/models/llama-v3p1-70b-instruct")

pip install -qU langchain-groq

import getpass

import os

os.environ["GROQ_API_KEY"] = getpass.getpass()

from langchain_groq import ChatGroq

llm = ChatGroq(model="llama3-8b-8192")

pip install -qU langchain-mistralai

import getpass

import os

os.environ["MISTRAL_API_KEY"] = getpass.getpass()

from langchain_mistralai import ChatMistralAI

llm = ChatMistralAI(model="mistral-large-latest")

pip install -qU langchain-openai

import getpass

import os

os.environ["TOGETHER_API_KEY"] = getpass.getpass()

from langchain_openai import ChatOpenAI

llm = ChatOpenAI(
    base_url="https://api.together.xyz/v1",
    api_key=os.environ["TOGETHER_API_KEY"],
    model="mistralai/Mixtral-8x7B-Instruct-v0.1",
)

Retriever

For the retriever, we will use WebBaseLoader to load the content of a web page. Here we instantiate an InMemoryVectorStore vector store and then use its .as_retriever method to build a retriever that can be incorporated into LCEL chains.

import bs4

from langchain.chains import create_retrieval_chain

from langchain.chains.combine_documents import create_stuff_documents_chain

from langchain_community.document_loaders import WebBaseLoader

from langchain_core.output_parsers import StrOutputParser

from langchain_core.prompts import ChatPromptTemplate

from langchain_core.runnables import RunnablePassthrough

from langchain_core.vectorstores import InMemoryVectorStore

from langchain_openai import OpenAIEmbeddings

from langchain_text_splitters import RecursiveCharacterTextSplitter

loader = WebBaseLoader(
    web_paths=("https://lilianweng.github.io/posts/2023-06-23-agent/",),
    bs_kwargs=dict(
        parse_only=bs4.SoupStrainer(
            class_=("post-content", "post-title", "post-header")
        )
    ),
)

docs = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)

splits = text_splitter.split_documents(docs)

vectorstore = InMemoryVectorStore(embedding=OpenAIEmbeddings())

vectorstore.add_documents(splits)

retriever = vectorstore.as_retriever()

USER_AGENT environment variable not set, consider setting it to identify your requests.
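To make the retriever's role concrete, here is a toy, dependency-free sketch of what an in-memory vector store does conceptually: embed each chunk, embed the query, and return the most similar chunks. The bag-of-words `embed` function and the `ToyVectorStore` class are illustrative assumptions, not the InMemoryVectorStore implementation.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in embedding: lowercase word counts (real embeddings are dense vectors)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    def __init__(self):
        self._entries = []  # list of (text, embedding) pairs

    def add_texts(self, texts):
        self._entries.extend((text, embed(text)) for text in texts)

    def search(self, query: str, k: int = 2):
        query_vec = embed(query)
        ranked = sorted(
            self._entries, key=lambda entry: cosine(query_vec, entry[1]), reverse=True
        )
        return [text for text, _ in ranked[:k]]


store = ToyVectorStore()
store.add_texts(
    [
        "task decomposition breaks a task into smaller steps",
        "memory lets an agent recall earlier information",
        "tool use lets an agent call external apis",
    ]
)
top = store.search("what is task decomposition", k=1)
```

A real embedding model captures meaning rather than exact word overlap, which is why the query sent to the retriever matters so much in the sections that follow.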

Prompt

We'll use a prompt that includes a MessagesPlaceholder variable under the name "chat_history". This allows us to pass in a list of Messages to the prompt using the "chat_history" input key, and these messages will be inserted after the system message and before the human message containing the latest question.

from langchain.chains import create_history_aware_retriever

from langchain_core.prompts import MessagesPlaceholder

contextualize_q_system_prompt = (
    "Given a chat history and the latest user question "
    "which might reference context in the chat history, "
    "formulate a standalone question which can be understood "
    "without the chat history. Do NOT answer the question, "
    "just reformulate it if needed and otherwise return it as is."
)

contextualize_q_prompt = ChatPromptTemplate.from_messages(
    [
        ("system", contextualize_q_system_prompt),
        MessagesPlaceholder("chat_history"),
        ("human", "{input}"),
    ]
)
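As a rough illustration of the ordering this prompt produces, the sketch below assembles the message list by hand using plain (role, content) tuples. It mimics the effect of the placeholder rather than calling LangChain, and the function name `format_contextualize_messages` is hypothetical.

```python
def format_contextualize_messages(system_text, chat_history, user_input):
    """Return messages in prompt order: system, then history, then the new question."""
    return [("system", system_text), *chat_history, ("human", user_input)]


history = [
    ("human", "What is Task Decomposition?"),
    ("ai", "It breaks a complex task into smaller steps."),
]

messages = format_contextualize_messages(
    "Reformulate the latest question as a standalone question.",
    history,
    "What is one way of doing it?",
)

roles = [role for role, _ in messages]
```

With an empty history the list collapses to just the system message and the latest question.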

Assembling the chain

We can then instantiate the history-aware retriever:

history_aware_retriever = create_history_aware_retriever(
    llm, retriever, contextualize_q_prompt
)

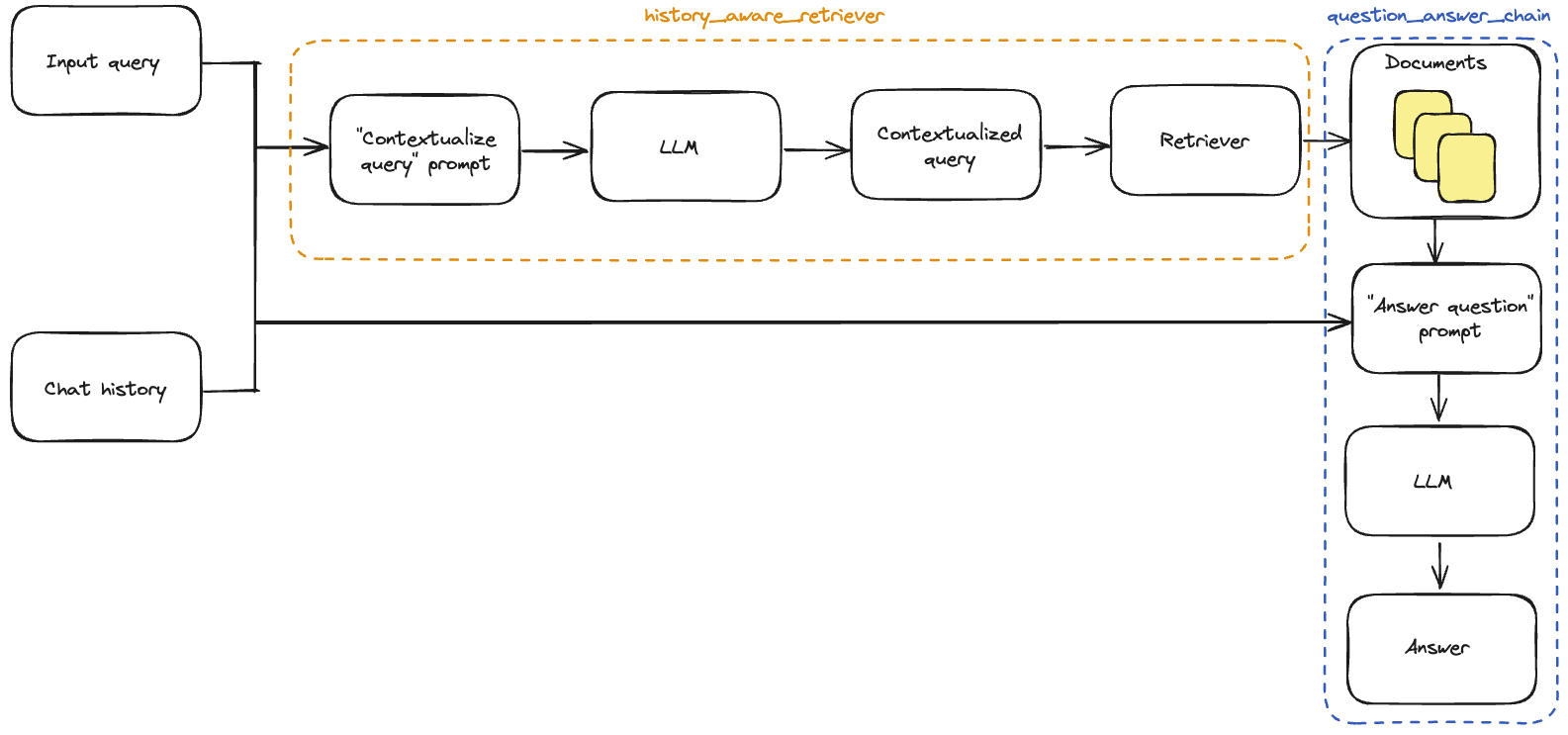

This chain prepends a rephrasing of the input query to our retriever, so that the retrieval incorporates the context of the conversation.
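Conceptually, the history-aware retriever behaves like the branch sketched below: with no chat history the input goes straight to the retriever, and otherwise the LLM first rewrites the question. This is a plain-Python sketch with stand-in callables (`fake_rephrase`, `fake_retrieve`, and `make_history_aware_retriever` are hypothetical names), not the library code.

```python
from typing import Callable, List, Sequence, Tuple

Message = Tuple[str, str]  # (role, content)


def make_history_aware_retriever(
    rephrase: Callable[[Sequence[Message], str], str],
    retrieve: Callable[[str], List[str]],
) -> Callable[[dict], List[str]]:
    def run(inputs: dict) -> List[str]:
        chat_history = inputs.get("chat_history") or []
        if not chat_history:
            # Nothing to contextualize: query the retriever with the raw input.
            return retrieve(inputs["input"])
        # Otherwise ask the model for a standalone question first.
        return retrieve(rephrase(chat_history, inputs["input"]))

    return run


seen_queries = []


def fake_retrieve(query: str) -> List[str]:
    seen_queries.append(query)
    return [f"doc for: {query}"]


def fake_rephrase(history: Sequence[Message], question: str) -> str:
    # A real LLM would rewrite the question; this stand-in resolves "it" naively.
    return question.replace(" it?", " task decomposition?")


retriever_fn = make_history_aware_retriever(fake_rephrase, fake_retrieve)

retriever_fn({"input": "What is Task Decomposition?", "chat_history": []})
retriever_fn(
    {
        "input": "What is one way of doing it?",
        "chat_history": [("human", "What is Task Decomposition?"), ("ai", "...")],
    }
)
```

The second call reaches the retriever as a self-contained question, which is what lets a follow-up such as "What is one way of doing it?" retrieve relevant passages.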

Now we can build our full QA chain.

As in the RAG tutorial, we will use create_stuff_documents_chain to generate a question_answer_chain with input keys context, chat_history, and input. It accepts the retrieved context alongside the conversation history and query to generate an answer.

We build our final rag_chain with create_retrieval_chain. This chain applies the history_aware_retriever and question_answer_chain in sequence, retaining intermediate outputs such as the retrieved context for convenience. It has input keys input and chat_history, and includes input, chat_history, context, and answer in its output.

system_prompt = (
    "You are an assistant for question-answering tasks. "
    "Use the following pieces of retrieved context to answer "
    "the question. If you don't know the answer, say that you "
    "don't know. Use three sentences maximum and keep the "
    "answer concise."
    "\n\n"
    "{context}"
)

qa_prompt = ChatPromptTemplate.from_messages(
    [
        ("system", system_prompt),
        MessagesPlaceholder("chat_history"),
        ("human", "{input}"),
    ]
)

question_answer_chain = create_stuff_documents_chain(llm, qa_prompt)

rag_chain = create_retrieval_chain(history_aware_retriever, question_answer_chain)
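The data flow described above can be pictured with ordinary dictionaries: the retrieval step adds `context`, the answering step adds `answer`, and the original keys are carried along. The stand-in functions below (`run_retrieval_chain`, `stub_retrieve`, `stub_answer`) are assumptions for illustration and do not call a model.

```python
def run_retrieval_chain(inputs, retrieve, answer):
    """Mimic the key flow: input + chat_history -> + context -> + answer."""
    state = dict(inputs)
    state["context"] = retrieve(state)  # documents found for this turn
    state["answer"] = answer(state)  # answer generated from context and history
    return state


def stub_retrieve(state):
    return ["Task decomposition splits a task into subtasks."]


def stub_answer(state):
    return f"Based on {len(state['context'])} document(s): " + state["context"][0]


result = run_retrieval_chain(
    {"input": "What is Task Decomposition?", "chat_history": []},
    stub_retrieve,
    stub_answer,
)
```

Keeping `context` in the output is convenient when you want to show sources next to the answer.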

Stateful Management of chat history

We have added application logic for incorporating chat history, but we are still manually plumbing it through our application. In a production Q&A application, we usually persist the chat history in a database and need to be able to read and update it appropriately.

LangGraph implements a built-in persistence layer, making it ideal for chat applications that support multiple conversational turns.

Wrapping our chat model in a minimal LangGraph application allows us to automatically persist the message history, simplifying the development of multi-turn applications.

LangGraph comes with a simple in-memory checkpointer, which we use below. See its documentation for more detail, including how to use different persistence backends (e.g., SQLite or Postgres).

For a detailed walkthrough of how to manage message history, head to the How to add message history (memory) guide.

from typing import Sequence

from langchain_core.messages import AIMessage, BaseMessage, HumanMessage

from langgraph.checkpoint.memory import MemorySaver

from langgraph.graph import START, StateGraph

from langgraph.graph.message import add_messages

from typing_extensions import Annotated, TypedDict

# We define a dict representing the state of the application.
# This state has the same input and output keys as `rag_chain`.
class State(TypedDict):
    input: str
    chat_history: Annotated[Sequence[BaseMessage], add_messages]
    context: str
    answer: str

# We then define a simple node that runs the `rag_chain`.
# The `return` values of the node update the graph state, so here we just
# update the chat history with the input message and response.
def call_model(state: State):
    response = rag_chain.invoke(state)
    return {
        "chat_history": [
            HumanMessage(state["input"]),
            AIMessage(response["answer"]),
        ],
        "context": response["context"],
        "answer": response["answer"],
    }

# Our graph consists only of one node:

workflow = StateGraph(state_schema=State)

workflow.add_edge(START, "model")

workflow.add_node("model", call_model)

# Finally, we compile the graph with a checkpointer object.

# This persists the state, in this case in memory.

memory = MemorySaver()

app = workflow.compile(checkpointer=memory)
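The `Annotated[..., add_messages]` annotation on `chat_history` tells LangGraph how to merge a node's return value into the existing state: new messages are appended rather than overwriting the list. The function below is a simplified stand-in for that reducer. The name `append_messages` and the plain-dict message shape with an `id` key are assumptions of this sketch.

```python
def append_messages(existing, new):
    """Simplified reducer: append new messages, replacing any with a matching id."""
    merged = list(existing)
    index_by_id = {
        m["id"]: i for i, m in enumerate(merged) if m.get("id") is not None
    }
    for message in new:
        message_id = message.get("id")
        if message_id is not None and message_id in index_by_id:
            merged[index_by_id[message_id]] = message  # update in place
        else:
            if message_id is not None:
                index_by_id[message_id] = len(merged)
            merged.append(message)
    return merged


state = {"chat_history": []}

# First turn: the node returns the human message and the AI reply.
state["chat_history"] = append_messages(
    state["chat_history"],
    [
        {"id": "1", "role": "human", "content": "What is Task Decomposition?"},
        {"id": "2", "role": "ai", "content": "Breaking a task into steps."},
    ],
)

# Second turn: two more messages are appended to the same history.
state["chat_history"] = append_messages(
    state["chat_history"],
    [
        {"id": "3", "role": "human", "content": "What is one way of doing it?"},
        {"id": "4", "role": "ai", "content": "Prompting an LLM for steps."},
    ],
)
```

This is why `call_model` only returns the two newest messages: the reducer takes care of accumulating the full history.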

config = {"configurable": {"thread_id": "abc123"}}

result = app.invoke(
    {"input": "What is Task Decomposition?"},
    config=config,
)

print(result["answer"])

Task decomposition is a technique used to break down complex tasks into smaller and simpler steps. This process helps agents or models tackle difficult tasks by dividing them into more manageable subtasks. Task decomposition can be achieved through methods like Chain of Thought (CoT) or Tree of Thoughts, which guide the agent in thinking step by step or exploring multiple reasoning possibilities at each step.

result = app.invoke(
    {"input": "What is one way of doing it?"},
    config=config,
)

print(result["answer"])

One way of task decomposition is by using Large Language Models (LLMs) with simple prompting, such as providing instructions like "Steps for XYZ" or asking about subgoals for achieving a specific task. This method leverages the power of LLMs to break down tasks into smaller components for easier handling. Additionally, task decomposition can also be done using task-specific instructions tailored to the nature of the task, like requesting a story outline for writing a novel.

The conversation history can be inspected via the state of the application:

chat_history = app.get_state(config).values["chat_history"]

for message in chat_history:
    message.pretty_print()

================================ Human Message =================================

What is Task Decomposition?

================================== Ai Message ==================================

Task decomposition is a technique used to break down complex tasks into smaller and simpler steps. This process helps agents or models tackle difficult tasks by dividing them into more manageable subtasks. Task decomposition can be achieved through methods like Chain of Thought (CoT) or Tree of Thoughts, which guide the agent in thinking step by step or exploring multiple reasoning possibilities at each step.

================================ Human Message =================================

What is one way of doing it?

================================== Ai Message ==================================

One way of task decomposition is by using Large Language Models (LLMs) with simple prompting, such as providing instructions like "Steps for XYZ" or asking about subgoals for achieving a specific task. This method leverages the power of LLMs to break down tasks into smaller components for easier handling. Additionally, task decomposition can also be done using task-specific instructions tailored to the nature of the task, like requesting a story outline for writing a novel.
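The `thread_id` in the config is what separates one conversation from another. As a mental model only, a checkpointer can be pictured as a mapping from thread id to saved state. `ToyCheckpointer` and `chat` below are hypothetical stand-ins and far simpler than MemorySaver.

```python
from collections import defaultdict


class ToyCheckpointer:
    """Keeps one message list per thread id, in memory only."""

    def __init__(self):
        self._threads = defaultdict(list)

    def get(self, thread_id):
        return list(self._threads[thread_id])

    def append(self, thread_id, messages):
        self._threads[thread_id].extend(messages)


def chat(checkpointer, thread_id, user_text):
    history = checkpointer.get(thread_id)
    # Stand-in "model": reports how many earlier messages it could see.
    reply = f"I can see {len(history)} earlier message(s)."
    checkpointer.append(thread_id, [("human", user_text), ("ai", reply)])
    return reply


saver = ToyCheckpointer()

first = chat(saver, "abc123", "What is Task Decomposition?")
second = chat(saver, "abc123", "What is one way of doing it?")
other = chat(saver, "def456", "What is one way of doing it?")
```

The same follow-up question sees two earlier messages on thread "abc123" and none on the new thread "def456", which starts with a clean history.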

Tying it together

For convenience, we tie together all of the necessary steps in a single code cell:

from typing import Sequence

import bs4

from langchain.chains import create_history_aware_retriever, create_retrieval_chain

from langchain.chains.combine_documents import create_stuff_documents_chain

from langchain_community.document_loaders import WebBaseLoader

from langchain_core.messages import AIMessage, BaseMessage, HumanMessage

from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder

from langchain_core.vectorstores import InMemoryVectorStore

from langchain_openai import ChatOpenAI, OpenAIEmbeddings

from langchain_text_splitters import RecursiveCharacterTextSplitter

from langgraph.checkpoint.memory import MemorySaver

from langgraph.graph import START, StateGraph

from langgraph.graph.message import add_messages

from typing_extensions import Annotated, TypedDict

llm = ChatOpenAI(model="gpt-3.5-turbo", temperature=0)

### Construct retriever ###

loader = WebBaseLoader(
    web_paths=("https://lilianweng.github.io/posts/2023-06-23-agent/",),
    bs_kwargs=dict(
        parse_only=bs4.SoupStrainer(
            class_=("post-content", "post-title", "post-header")
        )
    ),
)

docs = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)

splits = text_splitter.split_documents(docs)

vectorstore = InMemoryVectorStore(embedding=OpenAIEmbeddings())

vectorstore.add_documents(documents=splits)

retriever = vectorstore.as_retriever()

### Contextualize question ###

contextualize_q_system_prompt = (
    "Given a chat history and the latest user question "
    "which might reference context in the chat history, "
    "formulate a standalone question which can be understood "
    "without the chat history. Do NOT answer the question, "
    "just reformulate it if needed and otherwise return it as is."
)

contextualize_q_prompt = ChatPromptTemplate.from_messages(
    [
        ("system", contextualize_q_system_prompt),
        MessagesPlaceholder("chat_history"),
        ("human", "{input}"),
    ]
)

history_aware_retriever = create_history_aware_retriever(
    llm, retriever, contextualize_q_prompt
)

### Answer question ###

system_prompt = (
    "You are an assistant for question-answering tasks. "
    "Use the following pieces of retrieved context to answer "
    "the question. If you don't know the answer, say that you "
    "don't know. Use three sentences maximum and keep the "
    "answer concise."
    "\n\n"
    "{context}"
)

qa_prompt = ChatPromptTemplate.from_messages(
    [
        ("system", system_prompt),
        MessagesPlaceholder("chat_history"),
        ("human", "{input}"),
    ]
)

question_answer_chain = create_stuff_documents_chain(llm, qa_prompt)

rag_chain = create_retrieval_chain(history_aware_retriever, question_answer_chain)

### Statefully manage chat history ###

# We define a dict representing the state of the application.
# This state has the same input and output keys as `rag_chain`.
class State(TypedDict):
    input: str
    chat_history: Annotated[Sequence[BaseMessage], add_messages]
    context: str
    answer: str

# We then define a simple node that runs the `rag_chain`.
# The `return` values of the node update the graph state, so here we just
# update the chat history with the input message and response.
def call_model(state: State):
    response = rag_chain.invoke(state)
    return {
        "chat_history": [
            HumanMessage(state["input"]),
            AIMessage(response["answer"]),
        ],
        "context": response["context"],
        "answer": response["answer"],
    }

# Our graph consists only of one node:

workflow = StateGraph(state_schema=State)

workflow.add_edge(START, "model")

workflow.add_node("model", call_model)

# Finally, we compile the graph with a checkpointer object.

# This persists the state, in this case in memory.

memory = MemorySaver()

app = workflow.compile(checkpointer=memory)

config = {"configurable": {"thread_id": "abc123"}}

result = app.invoke(
    {"input": "What is Task Decomposition?"},
    config=config,
)

print(result["answer"])

Task decomposition is a technique used to break down complex tasks into smaller and simpler steps. This process helps agents or models handle difficult tasks by dividing them into more manageable subtasks. Different methods like Chain of Thought and Tree of Thoughts are used to decompose tasks into multiple steps, enhancing performance and aiding in the interpretation of the thinking process.

result = app.invoke(
    {"input": "What is one way of doing it?"},
    config=config,
)

print(result["answer"])

One way of task decomposition is by using Large Language Models (LLMs) with simple prompting, such as providing instructions like "Steps for XYZ" or asking about subgoals for achieving a specific task. This method leverages the power of LLMs to break down tasks into smaller components for easier handling and processing.

Agents

Agents leverage the reasoning capabilities of LLMs to make decisions during execution. Using agents allows you to offload some discretion over the retrieval process. Although their behavior is less predictable than chains, they offer some advantages in this context:

- Agents generate the input to the retriever directly, without necessarily needing us to explicitly build in contextualization, as we did above;

- Agents can execute multiple retrieval steps in service of a query, or refrain from executing a retrieval step altogether (e.g., in response to a generic greeting from a user).
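The loop below sketches the control flow that gives an agent this discretion: the model either requests a tool call or returns a final answer, and tool results are fed back until it answers. The scripted `scripted_model`, the `run_agent` helper, and the message dict shape are assumptions for illustration; a real agent would call an LLM here.

```python
def run_agent(model, tools, user_text, max_steps=5):
    """Call the model until it stops requesting tools (or max_steps is hit)."""
    messages = [{"role": "human", "content": user_text}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append(reply)
        tool_call = reply.get("tool_call")
        if tool_call is None:
            return messages  # final answer, no (further) retrieval needed
        output = tools[tool_call["name"]](tool_call["query"])
        messages.append({"role": "tool", "content": output})
    return messages


def scripted_model(messages):
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "ai", "content": f"Answer based on: {last['content']}"}
    if "decomposition" in last["content"].lower():
        # The model writes its own search query instead of copying the input.
        return {
            "role": "ai",
            "content": "",
            "tool_call": {"name": "blog_post_retriever", "query": "Task Decomposition"},
        }
    return {"role": "ai", "content": "Hello! How can I help?"}


tools = {"blog_post_retriever": lambda query: f"excerpt about {query}"}

greeting = run_agent(scripted_model, tools, "Hi! I'm bob")
lookup = run_agent(scripted_model, tools, "What is Task Decomposition?")
```

The greeting finishes after a single model call with no retrieval, while the question triggers one tool call followed by a final answer.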

Retrieval tool

Agents can access "tools" and manage their execution. In this case, we will convert our retriever into a LangChain tool to be wielded by the agent:

from langchain.tools.retriever import create_retriever_tool

tool = create_retriever_tool(
    retriever,
    "blog_post_retriever",
    "Searches and returns excerpts from the Autonomous Agents blog post.",
)

tools = [tool]
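In effect, a retriever tool is a named function that takes a query string and returns the retrieved passages as text for the model to read. The sketch below shows that shape with a hypothetical `make_retriever_tool` helper and a toy keyword retriever. Joining documents with a blank line and representing the tool as a dict are assumptions of this sketch, not the library's behavior.

```python
def make_retriever_tool(retrieve, name, description, separator="\n\n"):
    """Wrap a retriever callable as a dict describing a tool the agent can call."""

    def func(query: str) -> str:
        return separator.join(retrieve(query))

    return {"name": name, "description": description, "func": func}


CHUNKS = [
    "Task decomposition can be done by an LLM with simple prompting.",
    "Tree of Thoughts explores multiple reasoning possibilities.",
    "Long-term memory is backed by an external vector store.",
]


def keyword_retrieve(query: str):
    """Toy retriever: return chunks sharing at least one word with the query."""
    words = set(query.lower().split())
    return [c for c in CHUNKS if words & set(c.lower().rstrip(".").split())]


toy_tool = make_retriever_tool(
    keyword_retrieve,
    "blog_post_retriever",
    "Searches and returns excerpts from the Autonomous Agents blog post.",
)

text = toy_tool["func"]("task decomposition")
```

The name and description matter because they are what the model reads when deciding whether, and with what query, to call the tool.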

Agent constructor

Now that we have defined the tools and the LLM, we can create the agent. We will use LangGraph to construct it. Here we use a high-level interface, but the nice thing about LangGraph is that this high-level interface is backed by a low-level, highly controllable API in case you want to modify the agent logic.

from langgraph.prebuilt import create_react_agent

agent_executor = create_react_agent(llm, tools)

We can now try it out. Note that so far it is not stateful (we still need to add memory):

from langchain_core.messages import HumanMessage

query = "What is Task Decomposition?"

for s in agent_executor.stream(
    {"messages": [HumanMessage(content=query)]},
):
    print(s)
    print("----")

{'agent': {'messages': [AIMessage(content='Task decomposition is a problem-solving strategy that involves breaking down a complex task or problem into smaller, more manageable subtasks. By decomposing a task into smaller components, it becomes easier to understand, analyze, and solve the overall problem. This approach allows individuals to focus on one specific aspect of the task at a time, leading to a more systematic and organized problem-solving process. Task decomposition is commonly used in various fields such as project management, software development, and engineering to simplify complex tasks and improve efficiency.', additional_kwargs={'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 102, 'prompt_tokens': 68, 'total_tokens': 170, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'stop', 'logprobs': None}, id='run-a0925ffd-f500-4677-a108-c7015987e9ae-0', usage_metadata={'input_tokens': 68, 'output_tokens': 102, 'total_tokens': 170})]}}

----

LangGraph comes with built-in persistence, so we don't need to use ChatMessageHistory! Rather, we can pass a checkpointer to our LangGraph agent directly.

Distinct conversations are managed by specifying a key for a conversation thread in the config dict, as shown below.

from langgraph.checkpoint.memory import MemorySaver

memory = MemorySaver()

agent_executor = create_react_agent(llm, tools, checkpointer=memory)

This is all we need to construct a conversational RAG agent.

Let's observe its behavior. Note that if we input a query that does not require a retrieval step, the agent does not execute one:

config = {"configurable": {"thread_id": "abc123"}}

for s in agent_executor.stream(
    {"messages": [HumanMessage(content="Hi! I'm bob")]}, config=config
):
    print(s)
    print("----")

{'agent': {'messages': [AIMessage(content='Hello Bob! How can I assist you today?', additional_kwargs={'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 11, 'prompt_tokens': 67, 'total_tokens': 78, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'stop', 'logprobs': None}, id='run-d9011a17-9dbb-4348-9a58-ff89419a4bca-0', usage_metadata={'input_tokens': 67, 'output_tokens': 11, 'total_tokens': 78})]}}

----

Further, if we input a query that does require a retrieval step, the agent generates the input to the tool:

query = "What is Task Decomposition?"

for s in agent_executor.stream(
    {"messages": [HumanMessage(content=query)]}, config=config
):
    print(s)
    print("----")

{'agent': {'messages': [AIMessage(content='', additional_kwargs={'tool_calls': [{'id': 'call_qVHvDTfYmWqcbgVhTwsH03aJ', 'function': {'arguments': '{"query":"Task Decomposition"}', 'name': 'blog_post_retriever'}, 'type': 'function'}], 'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 19, 'prompt_tokens': 91, 'total_tokens': 110, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'tool_calls', 'logprobs': None}, id='run-bf9df2a6-ad56-43af-8d57-16f850accfd1-0', tool_calls=[{'name': 'blog_post_retriever', 'args': {'query': 'Task Decomposition'}, 'id': 'call_qVHvDTfYmWqcbgVhTwsH03aJ', 'type': 'tool_call'}], usage_metadata={'input_tokens': 91, 'output_tokens': 19, 'total_tokens': 110})]}}

----

{'tools': {'messages': [ToolMessage(content='Fig. 1. Overview of a LLM-powered autonomous agent system.\nComponent One: Planning#\nA complicated task usually involves many steps. An agent needs to know what they are and plan ahead.\nTask Decomposition#\nChain of thought (CoT; Wei et al. 2022) has become a standard prompting technique for enhancing model performance on complex tasks. The model is instructed to “think step by step” to utilize more test-time computation to decompose hard tasks into smaller and simpler steps. CoT transforms big tasks into multiple manageable tasks and shed lights into an interpretation of the model’s thinking process.\n\nTree of Thoughts (Yao et al. 2023) extends CoT by exploring multiple reasoning possibilities at each step. It first decomposes the problem into multiple thought steps and generates multiple thoughts per step, creating a tree structure. The search process can be BFS (breadth-first search) or DFS (depth-first search) with each state evaluated by a classifier (via a prompt) or majority vote.\nTask decomposition can be done (1) by LLM with simple prompting like "Steps for XYZ.\\n1.", "What are the subgoals for achieving XYZ?", (2) by using task-specific instructions; e.g. "Write a story outline." for writing a novel, or (3) with human inputs.\n\n(3) Task execution: Expert models execute on the specific tasks and log results.\nInstruction:\n\nWith the input and the inference results, the AI assistant needs to describe the process and results. The previous stages can be formed as - User Input: {{ User Input }}, Task Planning: {{ Tasks }}, Model Selection: {{ Model Assignment }}, Task Execution: {{ Predictions }}. You must first answer the user\'s request in a straightforward manner. Then describe the task process and show your analysis and model inference results to the user in the first person. If inference results contain a file path, must tell the user the complete file path.\n\nFig. 11. 
Illustration of how HuggingGPT works. (Image source: Shen et al. 2023)\nThe system comprises of 4 stages:\n(1) Task planning: LLM works as the brain and parses the user requests into multiple tasks. There are four attributes associated with each task: task type, ID, dependencies, and arguments. They use few-shot examples to guide LLM to do task parsing and planning.\nInstruction:', name='blog_post_retriever', id='742ab53d-6f34-4607-bde7-13f2d75e0055', tool_call_id='call_qVHvDTfYmWqcbgVhTwsH03aJ')]}}

----

{'agent': {'messages': [AIMessage(content='Task decomposition is a technique used in autonomous agent systems to break down complex tasks into smaller and simpler steps. This approach helps the agent to manage and execute tasks more effectively by dividing them into manageable subtasks. One common method for task decomposition is the Chain of Thought (CoT) technique, which prompts the model to think step by step and decompose hard tasks into smaller steps. Another extension of CoT is the Tree of Thoughts, which explores multiple reasoning possibilities at each step by creating a tree structure of thought steps.\n\nTask decomposition can be achieved through various methods, such as using language models with simple prompting, task-specific instructions, or human inputs. By breaking down tasks into smaller components, autonomous agents can plan and execute tasks more efficiently.\n\nIf you would like more detailed information or examples related to task decomposition, feel free to ask!', additional_kwargs={'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 168, 'prompt_tokens': 611, 'total_tokens': 779, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'stop', 'logprobs': None}, id='run-0f51a1cf-ff0a-474a-93f5-acf54e0d8cd6-0', usage_metadata={'input_tokens': 611, 'output_tokens': 168, 'total_tokens': 779})]}}

----

Above, instead of inserting our query verbatim into the tool, the agent stripped unnecessary words like "what" and "is".

This same principle allows the agent to use the context of the conversation when necessary:

query = "What according to the blog post are common ways of doing it? redo the search"

for s in agent_executor.stream(
    {"messages": [HumanMessage(content=query)]}, config=config
):
    print(s)
    print("----")

{'agent': {'messages': [AIMessage(content='', additional_kwargs={'tool_calls': [{'id': 'call_n7vUrFacrvl5wUGmz5EGpmCS', 'function': {'arguments': '{"query":"Common ways of task decomposition"}', 'name': 'blog_post_retriever'}, 'type': 'function'}], 'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 21, 'prompt_tokens': 802, 'total_tokens': 823, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'tool_calls', 'logprobs': None}, id='run-4d949be3-00e5-49e5-af26-6a217efc8858-0', tool_calls=[{'name': 'blog_post_retriever', 'args': {'query': 'Common ways of task decomposition'}, 'id': 'call_n7vUrFacrvl5wUGmz5EGpmCS', 'type': 'tool_call'}], usage_metadata={'input_tokens': 802, 'output_tokens': 21, 'total_tokens': 823})]}}

----

{'tools': {'messages': [ToolMessage(content='Fig. 1. Overview of a LLM-powered autonomous agent system.\nComponent One: Planning#\nA complicated task usually involves many steps. An agent needs to know what they are and plan ahead.\nTask Decomposition#\nChain of thought (CoT; Wei et al. 2022) has become a standard prompting technique for enhancing model performance on complex tasks. The model is instructed to “think step by step” to utilize more test-time computation to decompose hard tasks into smaller and simpler steps. CoT transforms big tasks into multiple manageable tasks and shed lights into an interpretation of the model’s thinking process.\n\nTree of Thoughts (Yao et al. 2023) extends CoT by exploring multiple reasoning possibilities at each step. It first decomposes the problem into multiple thought steps and generates multiple thoughts per step, creating a tree structure. The search process can be BFS (breadth-first search) or DFS (depth-first search) with each state evaluated by a classifier (via a prompt) or majority vote.\nTask decomposition can be done (1) by LLM with simple prompting like "Steps for XYZ.\\n1.", "What are the subgoals for achieving XYZ?", (2) by using task-specific instructions; e.g. "Write a story outline." for writing a novel, or (3) with human inputs.\n\nResources:\n1. Internet access for searches and information gathering.\n2. Long Term memory management.\n3. GPT-3.5 powered Agents for delegation of simple tasks.\n4. File output.\n\nPerformance Evaluation:\n1. Continuously review and analyze your actions to ensure you are performing to the best of your abilities.\n2. Constructively self-criticize your big-picture behavior constantly.\n3. Reflect on past decisions and strategies to refine your approach.\n4. Every command has a cost, so be smart and efficient. 
Aim to complete tasks in the least number of steps.\n\n(3) Task execution: Expert models execute on the specific tasks and log results.\nInstruction:\n\nWith the input and the inference results, the AI assistant needs to describe the process and results. The previous stages can be formed as - User Input: {{ User Input }}, Task Planning: {{ Tasks }}, Model Selection: {{ Model Assignment }}, Task Execution: {{ Predictions }}. You must first answer the user\'s request in a straightforward manner. Then describe the task process and show your analysis and model inference results to the user in the first person. If inference results contain a file path, must tell the user the complete file path.', name='blog_post_retriever', id='90fcbc1e-0736-47bc-9a96-347ad837e0e3', tool_call_id='call_n7vUrFacrvl5wUGmz5EGpmCS')]}}

----

{'agent': {'messages': [AIMessage(content='According to the blog post, common ways of task decomposition include:\n\n1. Using Language Models (LLM) with Simple Prompting: Language models can be utilized with simple prompts like "Steps for XYZ" or "What are the subgoals for achieving XYZ?" to break down tasks into smaller steps.\n\n2. Task-Specific Instructions: Providing task-specific instructions to guide the decomposition process. For example, using instructions like "Write a story outline" for writing a novel can help in breaking down the task effectively.\n\n3. Human Inputs: Involving human inputs in the task decomposition process. Human insights and expertise can contribute to breaking down complex tasks into manageable subtasks.\n\nThese methods of task decomposition help autonomous agents in planning and executing tasks more efficiently by breaking them down into smaller and simpler components.', additional_kwargs={'refusal': None}, response_metadata={'token_usage': {'completion_tokens': 160, 'prompt_tokens': 1347, 'total_tokens': 1507, 'completion_tokens_details': {'reasoning_tokens': 0}}, 'model_name': 'gpt-3.5-turbo-0125', 'system_fingerprint': None, 'finish_reason': 'stop', 'logprobs': None}, id='run-087ce1b5-f897-40d0-8ef4-eb1c6852a835-0', usage_metadata={'input_tokens': 1347, 'output_tokens': 160, 'total_tokens': 1507})]}}

----

Note that the agent was able to infer that "it" in our query refers to "task decomposition" and, as a result, generated a reasonable search query: in this case, "common ways of task decomposition".
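One way to check which search query the agent generated is to read the tool calls off the streamed steps. The helper below is a minimal sketch and not part of LangChain: `extract_tool_queries` is a hypothetical name. It assumes each step has the `{node: {"messages": [...]}}` shape shown in the output above, that AI messages expose `tool_calls` as a list of dicts, and that the retriever tool's argument is named `query`.

```python
from typing import Iterable


def extract_tool_queries(steps: Iterable[dict]) -> list:
    """Collect the `query` argument of every tool call found in streamed steps.

    Assumes each step looks like {"agent": {"messages": [...]}} or
    {"tools": {"messages": [...]}}, as in the output shown above.
    """
    queries = []
    for step in steps:
        for node_output in step.values():
            for message in node_output.get("messages", []):
                # Messages without tool calls (e.g. tool results) are skipped.
                for call in getattr(message, "tool_calls", None) or []:
                    query = call.get("args", {}).get("query")
                    if query is not None:
                        queries.append(query)
    return queries
```

Passing the collected steps of a follow-up question to this helper should surface the rewritten query, which makes it easy to confirm that the agent resolved references such as "it" from the conversation history.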

Tying it together

For convenience, we tie together all of the necessary steps in a single code cell:

import bs4
from langchain.tools.retriever import create_retriever_tool
from langchain_community.document_loaders import WebBaseLoader
from langchain_core.vectorstores import InMemoryVectorStore
from langchain_openai import ChatOpenAI, OpenAIEmbeddings
from langchain_text_splitters import RecursiveCharacterTextSplitter
from langgraph.checkpoint.memory import MemorySaver
from langgraph.prebuilt import create_react_agent

memory = MemorySaver()
llm = ChatOpenAI(model="gpt-3.5-turbo", temperature=0)

### Construct retriever ###
loader = WebBaseLoader(
    web_paths=("https://lilianweng.github.io/posts/2023-06-23-agent/",),
    bs_kwargs=dict(
        parse_only=bs4.SoupStrainer(
            class_=("post-content", "post-title", "post-header")
        )
    ),
)
docs = loader.load()

text_splitter = RecursiveCharacterTextSplitter(chunk_size=1000, chunk_overlap=200)
splits = text_splitter.split_documents(docs)
vectorstore = InMemoryVectorStore(embedding=OpenAIEmbeddings())
vectorstore.add_documents(documents=splits)
retriever = vectorstore.as_retriever()

### Build retriever tool ###
tool = create_retriever_tool(
    retriever,
    "blog_post_retriever",
    "Searches and returns excerpts from the Autonomous Agents blog post.",
)
tools = [tool]

agent_executor = create_react_agent(llm, tools, checkpointer=memory)

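To exercise the memory, the agent has to be invoked with a `thread_id` so the checkpointer knows which conversation to resume. The following is a minimal sketch under stated assumptions: `thread_config` and `ask` are hypothetical helper names introduced here for illustration, the thread ID `"abc123"` is arbitrary, and the agent is assumed to accept `("user", text)` tuples as messages and to expose a `stream` method that takes a `config` argument.

```python
from typing import Any, Iterator


def thread_config(thread_id: str) -> dict:
    """Build the config that selects which conversation thread to resume."""
    return {"configurable": {"thread_id": thread_id}}


def ask(agent: Any, query: str, thread_id: str) -> Iterator[dict]:
    """Stream a single user turn through the agent on the given thread."""
    inputs = {"messages": [("user", query)]}
    yield from agent.stream(inputs, config=thread_config(thread_id))


# Usage, assuming `agent_executor` from the cell above and a valid OPENAI_API_KEY:
#
# for step in ask(agent_executor, "What is Task Decomposition?", "abc123"):
#     print(step)
#     print("----")
#
# # Same thread_id, so the agent can resolve "it" from the earlier turn.
# for step in ask(agent_executor, "What are common ways of doing it?", "abc123"):
#     print(step)
#     print("----")
```

Reusing the same `thread_id` continues the conversation, while a new `thread_id` should start from an empty history.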

Next steps

We've covered the steps to build a basic conversational Q&A application:

- We used chains to build a predictable application that generates search queries for each user input;

- We used agents to build an application that "decides" when and how to generate search queries.

To explore different types of retrievers and retrieval strategies, visit the retrievers section of the how-to guides.

For a detailed walkthrough of LangChain's conversation memory abstractions, visit the How to add message history (memory) LCEL page.

To learn more about agents, head to the Agents Modules section.